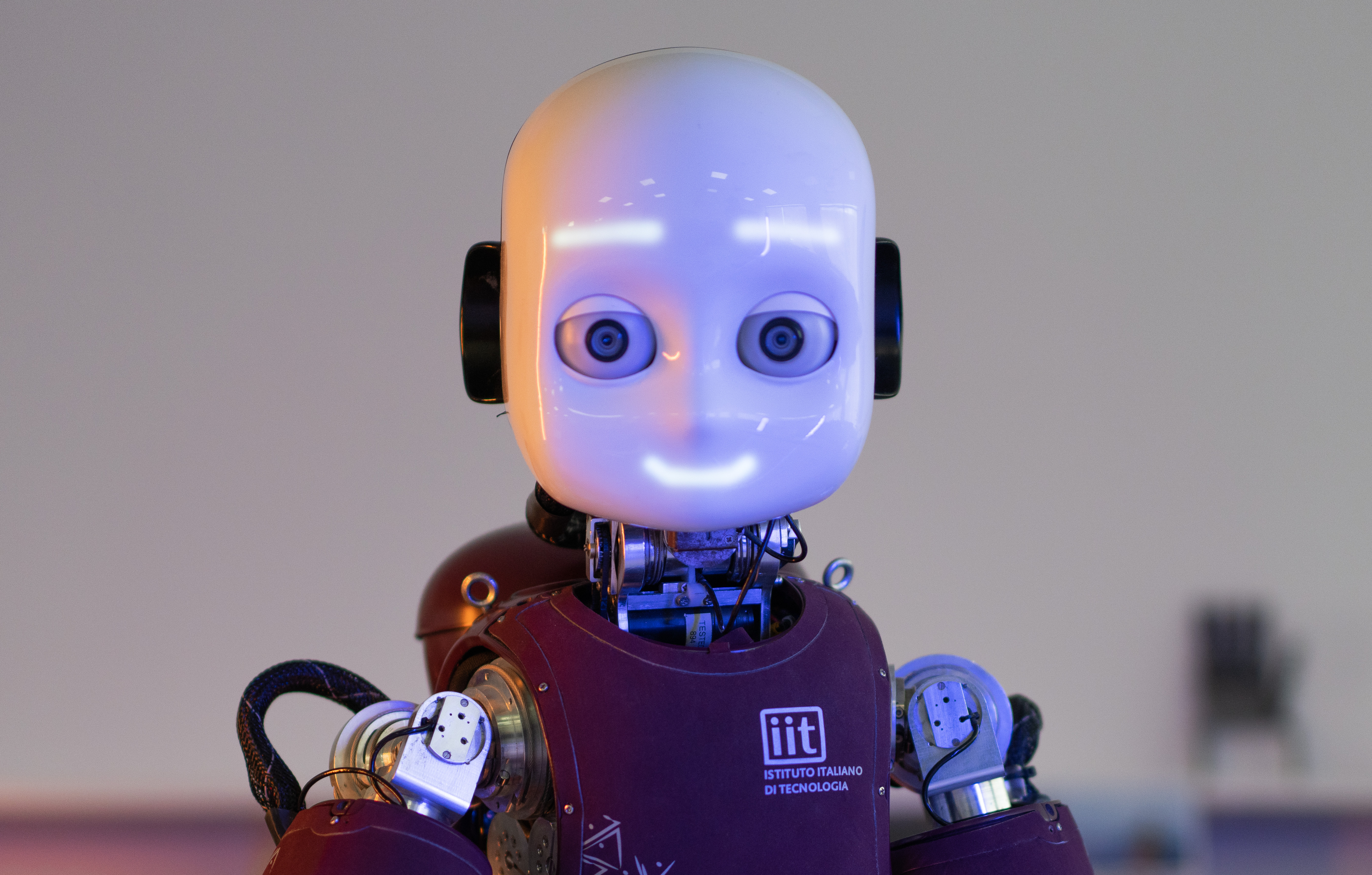

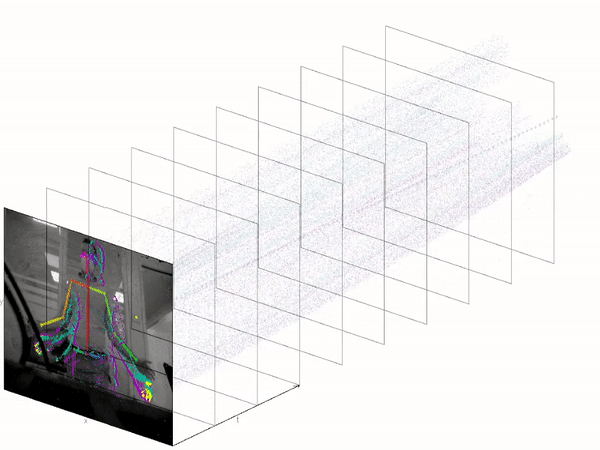

Robots need to be aware of human presence and actions, both for safety and to engage in collaborative tasks. We aim to leverage the low latency and high temporal resolution of event cameras to detect and track humans and infer their actions online. We also couple auditory and visual perception to detect and localize speech, with the goal of enabling the robot to select one person and follow their speech.

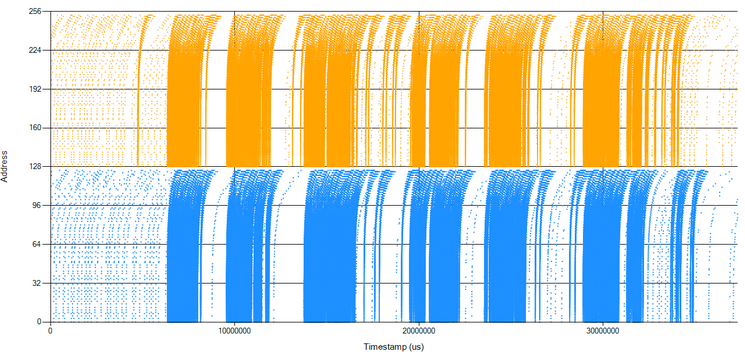

Methods: event-driven machine learning implemented in spiking neural networks (SNNs); event-driven vision (motion estimation and tracking)