July 10, 2023

9:00 - 13:00 (CEST)

Porto Antico, Genova, Italy

Abstract

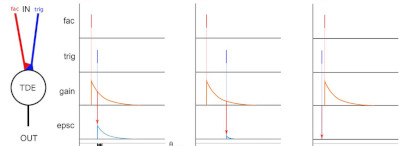

Fast and efficient detection of motion in the visual field and localisation of sound sources are crucial for the survival reactions of autonomous agents acting in unconstrained scenarios. Both processes rely on the timing and spatial information of sensory stimuli. Biology offers countless examples of neural structures that exploit temporal and spatial correlations of sensory stimuli to detect and estimate visual motion [1, 2] or to localise sound sources (as in the barn owl [3]). These mechanisms inspired the design and implementation of the Time-Difference Encoder (TDE) [4]. The TDE has previously been demonstrated in robotic applications for touch, auditory stimuli and visual motion detection [5, 6, 7, 8, 9, 10, 11]. The tutorial aims to introduce the TDE model in a simulated and controlled environment, as a step towards implementing a system that works in real-world scenarios. It starts with talks that introduce correlation detector models derived from biology, show how such models can be abstracted with a neuromorphic approach, describe the TDE model and present its applicability in various use cases. Finally, we will dive into a hands-on session to simulate the model in a shared Jupyter notebook, using provided data from an event-driven vision sensor.
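The TDE principle can be illustrated with a minimal, time-stepped Python sketch. It follows the commonly described TDE scheme, simplified here as an assumption: a spike on the facilitatory input sets a gain trace that decays exponentially; a later spike on the trigger input injects an excitatory post-synaptic current (EPSC) scaled by the current gain; and a leaky integrate-and-fire neuron integrates that current, so the number of output spikes shrinks as the time difference grows. The function `tde_spike_count` and all parameter values (time constants, weight `w`, threshold `v_th`) are hypothetical and are not taken from [4] or from the tutorial notebook.

```python
def tde_spike_count(t_fac, t_trig, tau_fac=0.02, tau_epsc=0.005, tau_mem=0.01,
                    w=20.0, v_th=1.0, dt=1e-4, t_end=0.2):
    """Hypothetical simplified TDE: count output spikes for one facilitatory
    spike at t_fac and one trigger spike at t_trig (times in seconds).
    All parameter values are illustrative assumptions."""
    gain = 0.0   # facilitation trace, set by the facilitatory input
    epsc = 0.0   # EPSC, injected by the trigger input
    v = 0.0      # membrane potential of the leaky integrate-and-fire output neuron
    spikes = 0
    fac_step = round(t_fac / dt)
    trig_step = round(t_trig / dt)
    for step in range(round(t_end / dt)):
        if step == fac_step:
            gain = 1.0                  # facilitatory spike (re)sets the gain
        if step == trig_step:
            epsc += w * gain            # trigger spike: EPSC scaled by current gain
        # Forward-Euler exponential decay of the gain trace and the EPSC
        gain -= dt * gain / tau_fac
        epsc -= dt * epsc / tau_epsc
        # Forward-Euler leaky integration of the EPSC by the output neuron
        v += dt * (-v + epsc) / tau_mem
        if v >= v_th:                   # threshold crossing: count spike, reset
            spikes += 1
            v = 0.0
    return spikes
```

In a visual-motion setting, the two inputs would come from neighbouring pixels of the event-driven sensor. With the assumed parameters, `tde_spike_count(0.0, 0.002)` (a 2 ms delay) yields more output spikes than `tde_spike_count(0.0, 0.010)`, while a 40 ms delay, or a trigger arriving before the facilitatory spike, yields none. That last case is what makes such a unit direction selective.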

Motivation

Bioinspiration offers a rich source of solutions to everyday robotic tasks. A robot exploring the world can take advantage of biological mechanisms to react quickly to external stimuli [12, 10, 13]. This tutorial focuses on biologically plausible mechanisms that detect time differences to encode features of different sensory cues, such as visual or auditory ones [4, 14]. The emerging bioinspired representation of sensory input as asynchronous events reduces the latency of intelligent systems by cutting redundant data and computational load.

This tutorial is strongly aligned with the research and understanding of living systems proposed as the focus of LM2023, in particular the exploitation of biological mechanisms of sensory encoding and signal communication. Introducing neuromorphic intelligent systems would bridge the gap between biologically inspired software and bodies through biologically inspired computing hardware, thereby widening the scope of the conference.

In biology, sensing is an analogue process that is not constrained by a temporal sampling rate: it provides no redundant information in the absence of a stimulus, while offering a high dynamic range when a stimulus is applied or changes. Neuromorphic event-driven sensors aim to roughly mimic this behaviour by sampling the environment asynchronously, providing low-latency and power-efficient information. Such sensors have been realised for vision, touch, olfaction and audition; on the application side, this tutorial focuses on auditory and vision sensors, which are introduced further in this context. Asynchronous bioinspired event-based sensing and the corresponding perception strategies inherently reduce redundancy, computation and latency, thereby improving a robot’s ability to react appropriately and promptly to its sensory inputs.

Living Machines 2023 focuses on biomimetic robots, computers, and active biomimetic materials and structures.
In this regard, our tutorial on bioinspired sensory processing offers an approach complementary to the systems presented at LM2023. The conference is an exciting opportunity to disseminate our work and to cross-fertilise the neuromorphic and living machines communities, creating interdisciplinary bonds.
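The change-driven sampling of event-based sensors described in the Motivation can be made concrete with a minimal Python sketch of one idealised event-camera pixel. It assumes the commonly used DVS-style model, in which a pixel emits an ON (+1) or OFF (-1) event whenever its log intensity has changed by a contrast threshold since its last event. The function name `dvs_events` and the threshold value are illustrative assumptions, not part of the tutorial material, and real sensors add noise, refractory periods and per-pixel mismatch.

```python
import math


def dvs_events(times, intensities, threshold=0.2):
    """Hypothetical idealised event pixel: return (time, polarity) tuples
    whenever log intensity has moved by `threshold` since the last event."""
    events = []
    ref = math.log(intensities[0])  # log intensity memorised at the last event
    for t, value in zip(times, intensities):
        delta = math.log(value) - ref
        # A large change can cross the threshold several times at once
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1
            events.append((t, polarity))
            ref += polarity * threshold  # move the reference by one threshold step
            delta = math.log(value) - ref
    return events
```

A constant input produces no events at all, whereas a step from 1.0 to 2.0 produces three ON events at the time of the step, because log 2 ≈ 0.69 crosses the assumed 0.2 threshold three times. Static scenes therefore cost nothing, which is the redundancy reduction referred to above.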